My scientific approach

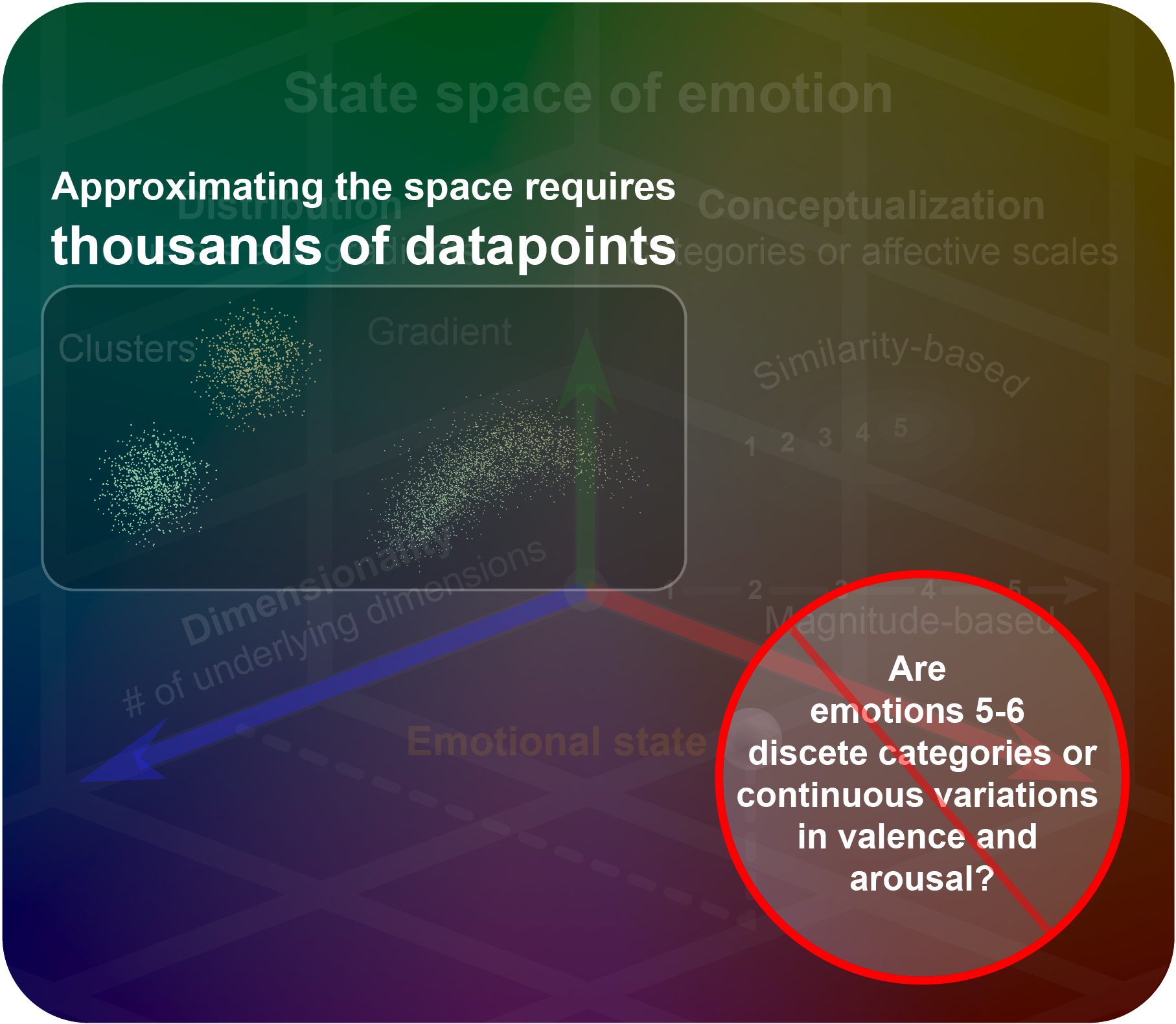

Studying emotion is about understanding why a person’s heart may race before a blind date, why someone can get goosebumps while looking over a sweeping canyon, and why it can be hard to get out of bed in the morning. These experiences vary in many ways, but scientists usually investigate only a few (2-6) emotional states or prototypical facial expressions at a time. This is a major reason why findings in emotion science have been ambiguous. By way of analogy, imagine trying to draw conclusions about the effect of brain size on intelligence from a study comparing a parrot, a dog, and an octopus. That study would be statistically flawed in the same fundamental way as most past emotion science studies.

To better understand emotion, I’ve spent over 10 years documenting how it varies. With colleagues at Berkeley, Google, and Hume AI, I’ve studied how different dimensions of emotional experience correspond to variability in the situations we encounter, in the patterns of brain activity they evoke, in physiological responses like goosebumps, heart palpitations, panting, and sweating, and in expressions of the face, body, and voice. We’ve also developed AI models to recognize and emulate these emotion-related responses. For the past few years I’ve focused primarily on the voice.

As we document the structure of human emotion-related responses, we’re paving the way for AI technologies optimized for human well-being. Our lives are increasingly defined by our interactions with AI agents, from search engines and social media algorithms to ChatGPT, customer service bots, AI tutors, AI doctors’ assistants, and so on. In a world full of beings smarter than us, which is inevitable, we’ll each need our own trusted AI that understands our values and priorities just to avoid being walked all over by AI acting on behalf of other entities. Smarter-than-human AI that learns our preferences from our expressions and seeks to maximize our happiness will massively increase our quality of life. Hume AI is working to advance the technology that will make this possible.

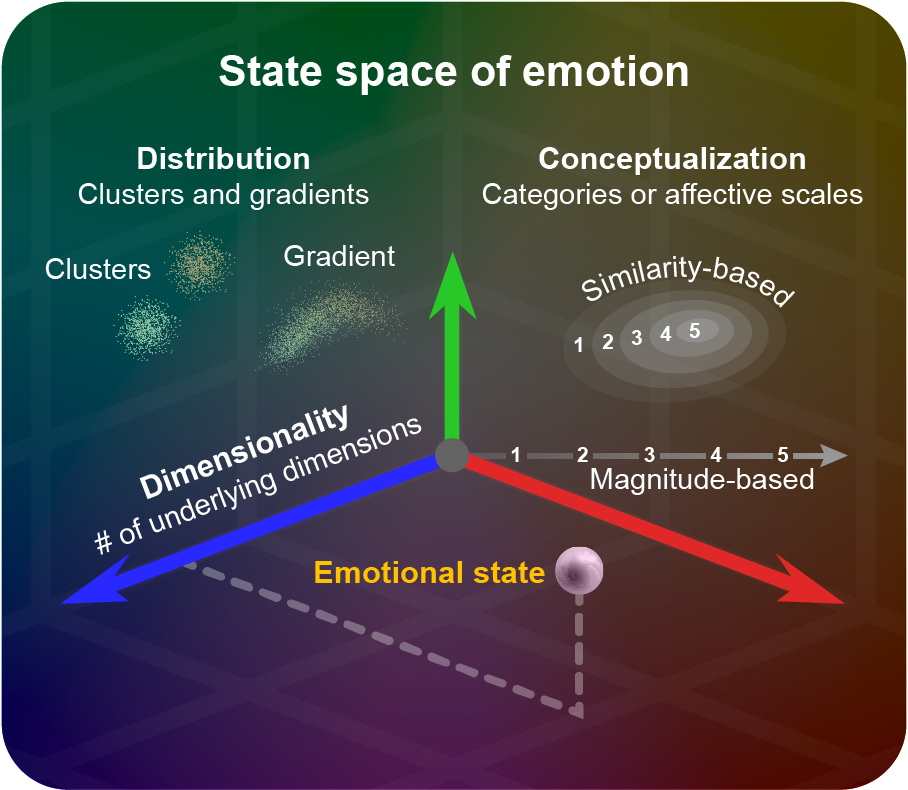

Semantic space theory defines emotions in terms of their conceptualization, dimensionality, and distribution.